In Part 1, we explain the basics and prerrequisites to work with Terraform.

In Part 2, we created our first Azure resource.

On this post we will create an Azure SQL Database and configure it so we can access it right away.

Example 2: Deploy and configure an Azure SQL database

To create an Azure SQL database, we need at least the following:

- Resource group (we already have it from the previous example)

- Azure SQL Server

- Azure SQL database

- At least one firewall rule

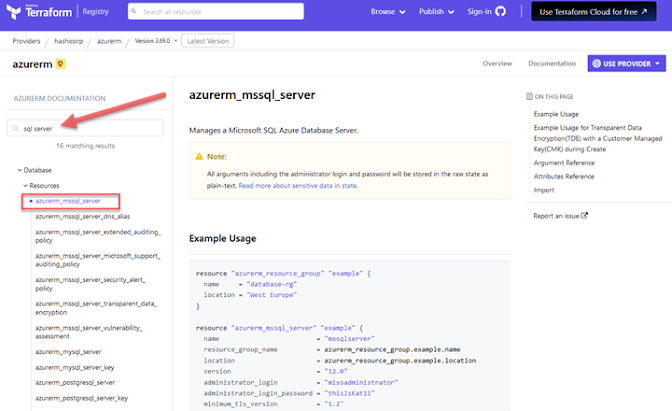

We can obtain code snippets on each resource from the Terraform provider documentation we checked on the previous example: https://registry.terraform.io/providers/hashicorp/azurerm/latest/docs

We proceed to add each component on the main.tf file (do not delete the previous code we have there)

At the end we should have something like this (just check resource names and parameters to match what you want.)

terraform {

required_providers {

azurerm = {

source = "hashicorp/azurerm"

version = "3.69.0"

}

}

}

provider "azurerm" {

# Configuration options

features {}

}

resource "azurerm_resource_group" "MyRG" {

name = "RG_TF_Tests"

location = "East US"

tags = {

environment = "Azure Resource tests"

}

}

#Azure SQL server

resource "azurerm_mssql_server" "sqlserver" {

name = "eduardopivaral-tf"

resource_group_name = azurerm_resource_group.MyRG.name

location = azurerm_resource_group.MyRG.location

version = "12.0"

# Note that the user and password is plain text, for this case we use variables,

# but that is out of scope for this post, we will discuss this on next posts.

administrator_login = "Gato"

administrator_login_password = "-n0meAcuerd0-"

#we add our account as administrator of the instance

azuread_administrator {

login_username = "epivaral@studyyourdata.com"

object_id = "<check object ID for the account in Azure Portal>"

}

}

# Azure SQL Database

resource "azurerm_mssql_database" "sqldb" {

name = "demoData"

server_id = azurerm_mssql_server.sqlserver.id

collation = "SQL_Latin1_General_CP1_CI_AS"

sku_name = "Basic" #we use basic tier

storage_account_type = "Local" #local redundancy storage

tags = {

description = "Part 1 - just the empty resource"

}

}

#firewall rule to allow us access to it

resource "azurerm_mssql_firewall_rule" "MyLaptopRule" {

name = "MyLaptopRule"

server_id = azurerm_mssql_server.sqlserver.id

start_ip_address = "181.209.256.300" #This IP does not exist, you should put your IP or range

end_ip_address = "181.209.256.300"

}

We execute Terraform plan:

Terraform plan

Notice how this time three resources will be added, and no action will be taken for the resource group.

We execute terraform apply -auto-approve

Terraform apply -auto-approve

If everything is ok, we should see the process completing successfully:

To validate it, navigate to Azure portal (or using SSMS) and validate you can access the database:

Since we also included a firewall rule for our laptop, we should access without issues:

Destroying our infrastructure

This is a demo and I do not want to incur on additional costs, so I will bring down all the infrastructure. In a real-world scenario probably, you will just remove individual resources instead of everything, but this is how we delete everything:

Terraform destroy

Same as apply, we can use the -auto-approve flag, but I do not recommend it for destroy activities.

If you want to destroy just one resource (like in a real-word scenario), use the -target='resource.name' flag.

You can validate resources that have been removed by checking in Azure portal.

As you noticed, this approach has a lot of information to digest, and IaC is just used to provision the underlying infrastructure in an automated and repeatable way.

To create database objects and populate tables with data, that is another part of the CI/CD pipeline, there are other tools for that, which we can discuss in the next posts.

As a suggested practice, you can try to deploy different resources to other providers like an AWS S3 bucket (we will make another posts for that as well).

Stay in touch for next articles where we will discuss sourcing and Terraform Cloud, and how to secure sensitive data.